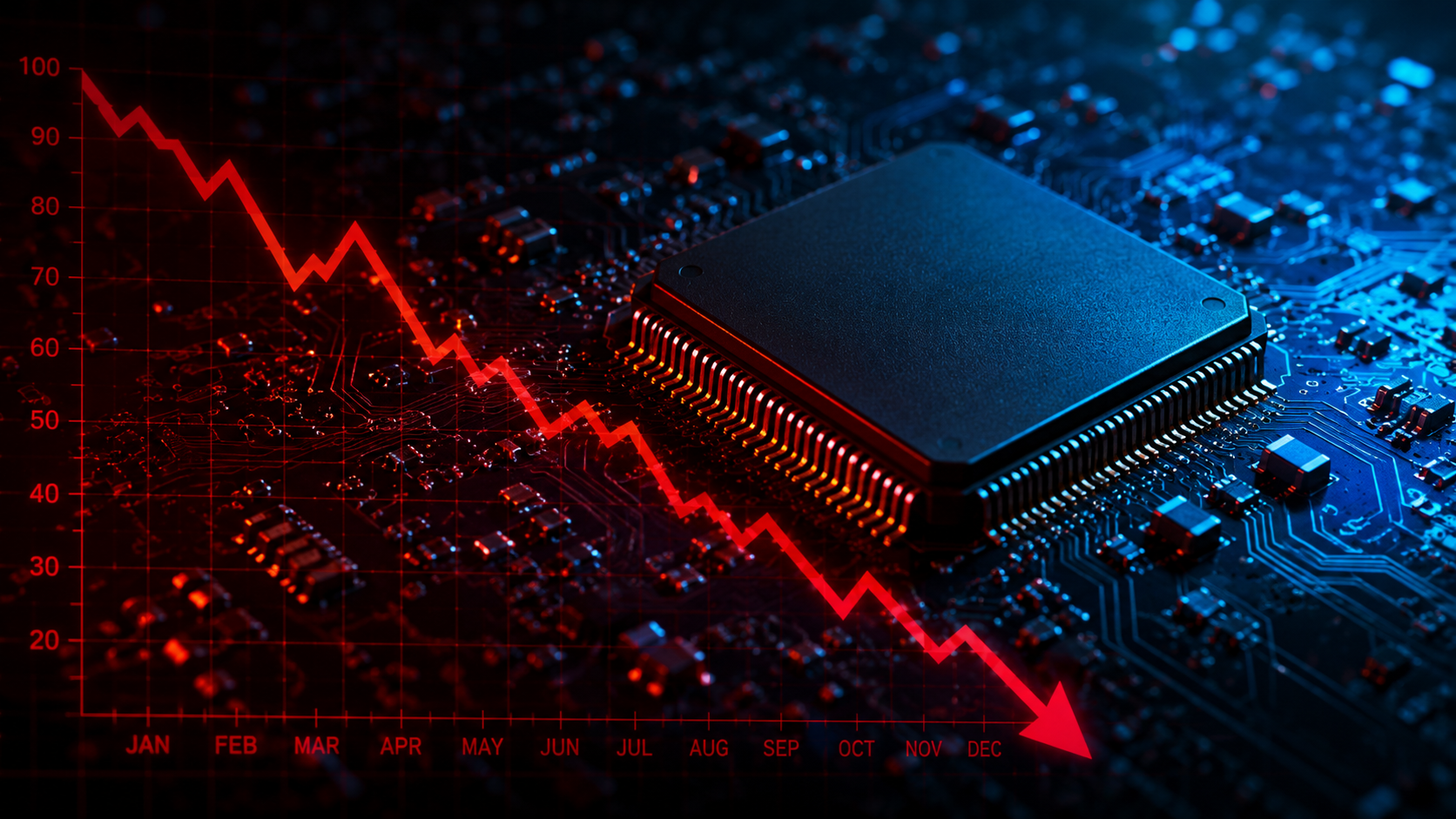

When Quantum Computing Inc. (Nasdaq: QUBT) announced in December 2025 that it would acquire Luminar Semiconductor from its bankrupt parent company for $110 million in cash, the market’s immediate reaction was a 7% single-day drop in the stock. The deal looked expensive, the target was being sold out of its parent’s Chapter 11 process, and questions about whether a microcap quantum computing company could absorb an acquisition of that scale were entirely legitimate.

Four months later, the first quarterly results to include the acquired businesses are on the table, and the numbers tell a different story than the initial skepticism suggested.

What QUBT Actually Bought

Luminar Semiconductor was a wholly owned subsidiary of Luminar Technologies, the lidar company that filed for Chapter 11 bankruptcy concurrently with the sale announcement. Critically, Luminar Semiconductor itself was not a debtor in the bankruptcy. It was operating normally as a subsidiary and continued doing so through the court-supervised Section 363 sale process, which QUBT won as the stalking horse bidder. The deal closed February 2, 2026.

What transferred to QUBT was a portfolio of established photonic technology businesses including Black Forest Engineering, Optogration, Freedom Photonics, and EM4 — collectively representing a mature set of capabilities in lasers, photodetectors, optical packaging, and manufacturing. These are not experimental technologies. They have existing commercial customers in defense, sensing, and optical communications, generating real revenue before a single quantum application is layered on top.

The strategic logic was vertical integration. QUBT operates a thin-film lithium niobate foundry in Tempe, Arizona, producing photonic chips that form the hardware foundation for its quantum systems. Luminar Semiconductor’s components are direct building blocks on that technology roadmap. By acquiring the supplier rather than remaining dependent on it, QUBT gained control of its supply chain, expanded its engineering depth, and added an established revenue base in a single transaction.

The Post-Acquisition Numbers

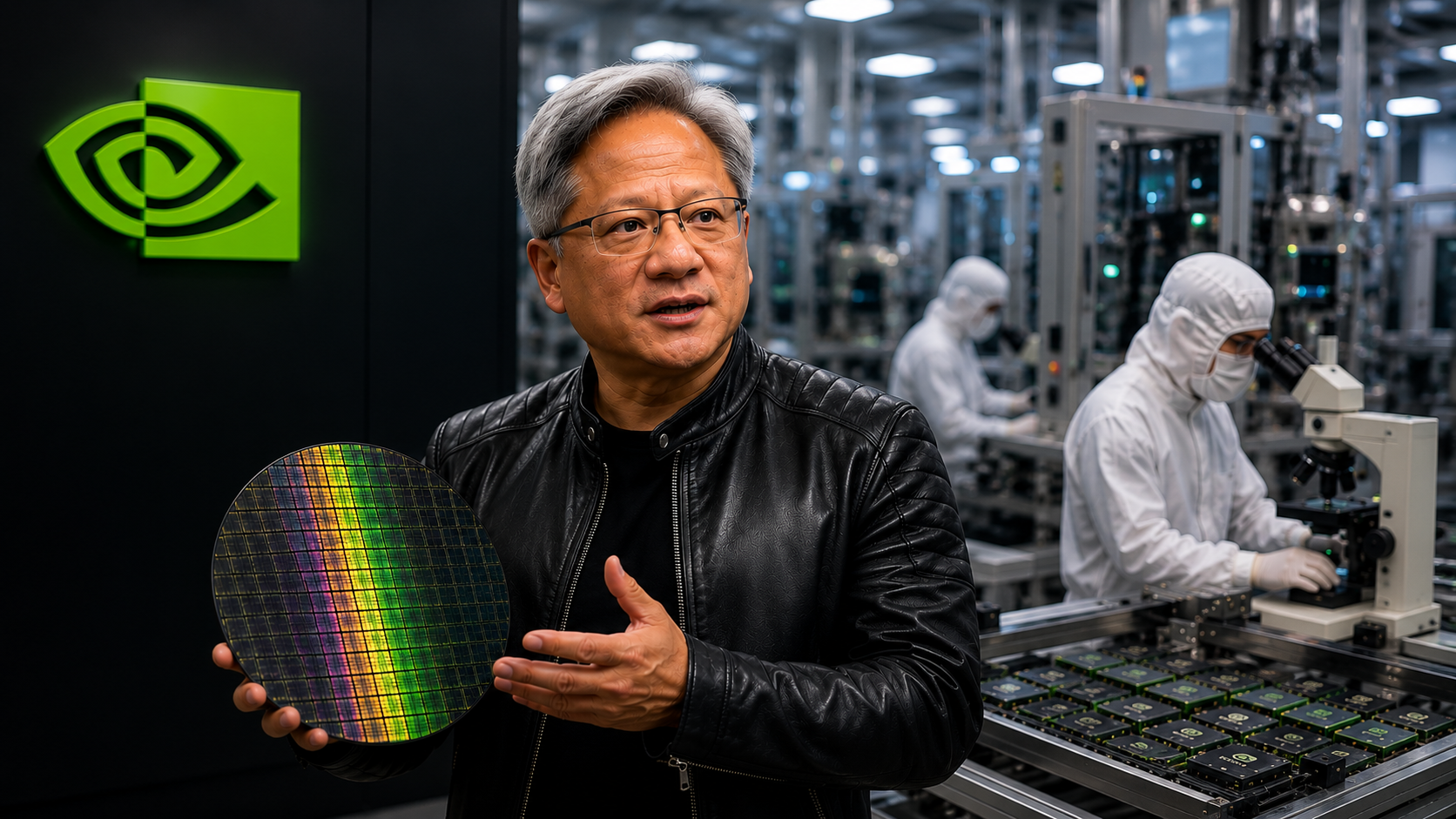

Q1 2026 revenue came in at $3.7 million, up from near zero in the prior-year period and well ahead of analyst consensus estimates. The net loss narrowed to $4.1 million, or $0.02 per share, also better than expected. Total assets at March 31 stood at approximately $1.6 billion, including a cash position of roughly $1.4 billion, a substantial liquidity cushion for a company of this size and stage. The stock gained 7% on earnings day and has advanced nearly 30% over the past month. Six analysts currently carry Buy ratings on the stock, with an average price target of $17.83 implying approximately 49% upside from current levels.
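For readers who want to check the arithmetic behind those figures, here is a minimal sketch in Python. It backs out the share price implied by the $17.83 average target and the roughly 49% upside, and the share of total assets held in cash. The function names are hypothetical, and because the inputs are the rounded figures quoted above, the outputs are approximations only.

```python
def implied_price(target: float, upside: float) -> float:
    """Share price implied by a price target and a stated fractional upside."""
    # target = price * (1 + upside), so price = target / (1 + upside)
    return target / (1.0 + upside)


def cash_share_of_assets(cash: float, total_assets: float) -> float:
    """Fraction of total assets held as cash."""
    return cash / total_assets


# Rounded figures quoted in the article (approximate inputs).
price = implied_price(target=17.83, upside=0.49)
cash_ratio = cash_share_of_assets(cash=1.4e9, total_assets=1.6e9)

print(f"Implied share price: ${price:.2f}")          # about $11.97
print(f"Cash as share of total assets: {cash_ratio:.1%}")  # about 87.5%
```

On these rounded inputs, the target implies a share price near $12, and cash makes up close to nine-tenths of the balance sheet.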

The Broader Context

The acquisition does not exist in isolation. Two weeks ago, the Trump administration announced $2 billion in equity investments across nine domestic quantum computing companies under the CHIPS and Science Act framework, a commitment that signals the federal government views quantum computing as a strategic national priority rather than a speculative technology bet. QUBT was not among the direct recipients in that announcement, but government validation of the sector is a tailwind for companies across the quantum computing ecosystem.

QUBT’s vertical integration strategy positions it as one of the few quantum companies attempting to control both the photonic hardware and the quantum application stack simultaneously, a differentiated approach in a sector where most competitors rely on third-party component suppliers.

The Risk Profile

The honest assessment includes the other side of the ledger. Per-share earnings are projected to decline significantly, meaning wider losses, as the company scales operations and absorbs integration costs. The stock trades at extreme price-to-sales multiples relative to current revenue. Cash burn remains a structural feature of the business at this stage, and dilution risk through future capital raises is a real variable. These are not edge cases; they are the central risks any investor in early-stage quantum computing needs to underwrite.

What has changed since the December acquisition announcement is that the revenue baseline is now measurably higher, the integration appears to be proceeding on track, and the government has put $2 billion of validation behind the sector QUBT is building into.