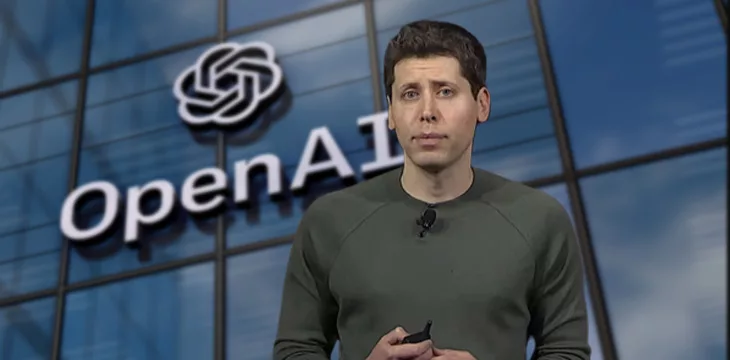

Humanoid robotics startup Figure AI recently closed a $675 million funding round that drew investment from Jeff Bezos, Nvidia, Microsoft, and OpenAI. The size of the round and the profile of its backers highlight how much potential investors focused on high-growth emerging technologies see in advanced robotics.

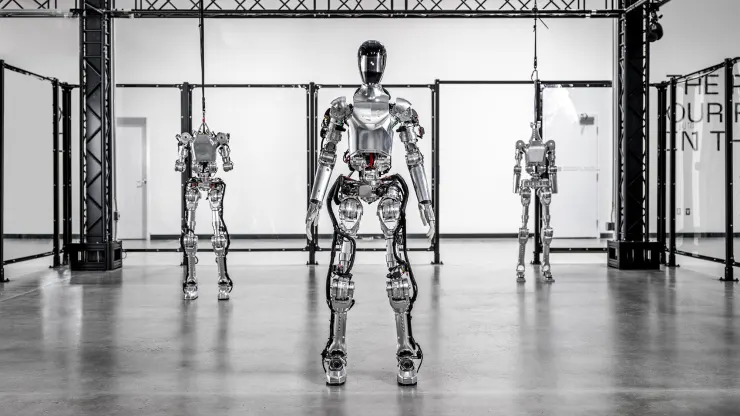

Figure AI is developing a human-shaped robot called Figure 01, designed for commercial use. With a human form and humanlike motion, Figure 01 is aimed at industries struggling with labor shortages, such as manufacturing, logistics, and warehousing. Deployed there, it could take over dangerous and repetitive jobs and boost productivity.

The multi-hundred-million-dollar funding round, backed by prominent tech investors, signals confidence in Figure AI’s ambitious goals. Advanced humanoid robots are likely still years away from becoming mainstream, but the capital injection gives Figure AI the resources to accelerate development of its robots' capabilities.

For small-cap investors, early-stage robotics companies like Figure AI represent a high-risk, high-reward proposition. The total addressable market for humanoid robots could reach $38 billion by 2035, according to Goldman Sachs forecasts. That’s up from virtually zero today.

Figure AI is not alone in pursuing this opportunity. Deep-pocketed companies are active in the space as well: Tesla is developing its own humanoid robot, Optimus, and Amazon has been testing humanoid robots in its warehouses. Competition will be fierce. But Figure AI’s partnerships with AI leaders OpenAI and Microsoft provide an edge, and its technology could set it apart if it is successfully commercialized.

The catch is that costs remain extremely high. Figure 01 units likely run from $30,000 to $150,000 each for now. Hardware and production expenses will need to come down significantly for mass adoption. But rapid advances in AI and cloud computing, along with cheaper components, should drive down costs over time.

For small-cap investors with patience and high risk tolerance, Figure AI represents an early-stage bet on transformative innovation. It offers exposure to a humanoid robotics industry that could eventually be worth billions of dollars.

While commercial viability remains uncertain, the technology’s promise is immense. Companies that crack the cost and production barriers first will be positioned to dominate the market. Figure AI now has ample capital to pursue that goal.

Its partnerships with AI and cloud infrastructure leaders provide unique advantages. And high-profile backers like Bezos and Nvidia give Figure AI added legitimacy versus competitors.

Investing in pre-revenue robotics startups is not for the faint of heart. Expect setbacks and delays on the long road to commercialization. But the size of the potential market makes it a worthwhile speculative bet for those focused on investing in emerging tech.

Figure AI faces risks typical of any early-stage hardware startup. Its humanoid robotics technology could fail, or a competitor could bring superior products to market faster. Execution challenges abound.

But with its new war chest and high-powered partnerships, Figure AI has a fighting chance to be a leader if and when humanoid robots move from R&D to mainstream adoption. For small-cap investors, it represents the type of high-upside moonshot that could pay off big if the stars align.